Howdyy Hacklido Readers 🙌

I m back with Day 2 of AI Security series 😎

Why Classifying AI Systems is important for Security?

In Cybersecurity field, a web application has different risks than mobile. Similarly in AI also we have very different attack surfaces for chatbots, classifiers, recognition models etc.

so, before learning AI attacks directly we must learn AI types.

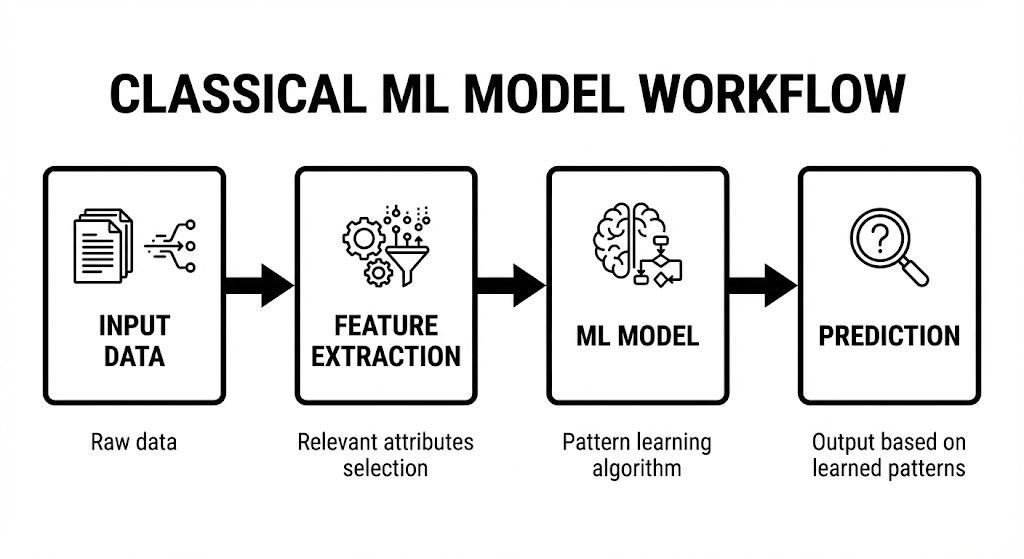

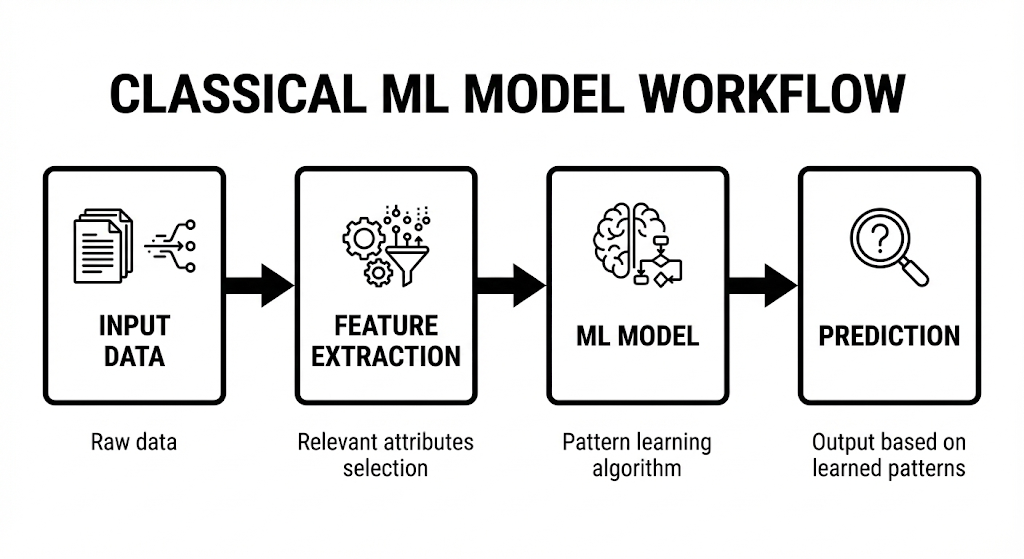

1. Classical Machine Learning Models

In simple terms, machine learning teaches model to learn from data and experience.

These are rule-based learning systems trained on structured data like numbers, categories.

Examples includes spam email detection, malware classification etc.

Common models includes:

- Linear Regression

- Logistic Regression

- Decision Trees

- Random Forest

- Support Vector Machines

Security Risks:

a. Data Poisoning

If attackers inject malicious data during training.

For Example: Spam emails labeled as “safe”.

The model learns the wrong behavior.

b. Evasion Attacks

Small input changes can bypass detection.

For Example: Attacker can add invisible characters to malware, this may lead to bypass.

c. Model Theft

Attackers query the model repeatedly and recreate it.

2. Deep Learning Systems

Deep Learning is brain inspired, neural networks to learn complex patterns from huge amounts of data.

It is usually used when data is large.

Examples includes speech recognition, face recognition etc.

Security Risks:

a. Adversarial Attacks

A small, tiny change in input can cause wrong predictions.

For Example : A it is extremely dangerous if the self-driving cars detect slightly modified “STOP” sign as “SPEED LIMIT”.

b. Model Opacity

It is usually hard to understand and explain model’s decisions.

Attackers usually love systems which humans can’t understand.

3. Vision Models

Vision Models are nothing but the models which can see and interpret images or videos, like Face recognition, Object Detection etc.

Security Risks:

a. Adversarial Images

Special glasses can be used to fool face recognition systems

b. Dataset Bias Exploitation

Poor performance on certain lighting, angles or demographics

c. Deepfake Injection

With the help of advanced techniques we can submit fake faces or documents as real one.

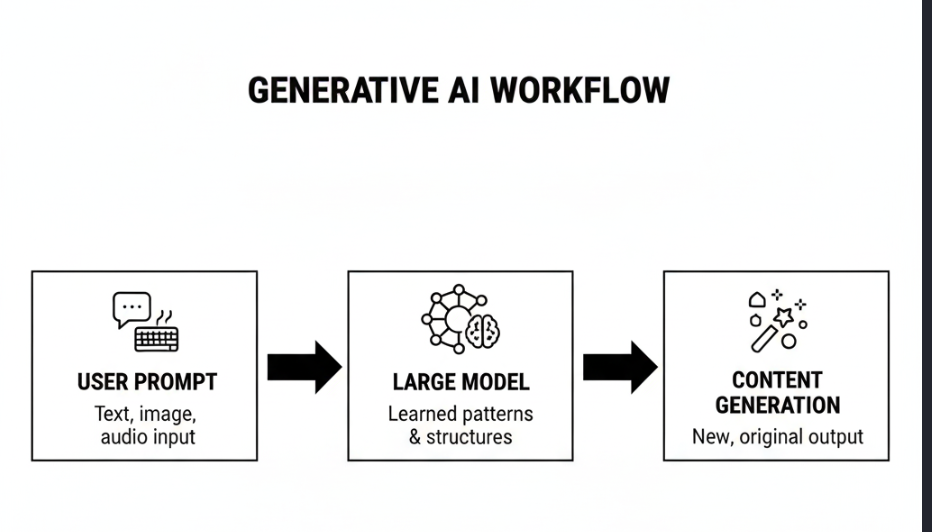

4. Generative AI

Which we are using daily to write assignments and cheat in quizzes [ChatGPT, Gemini, Deepseek etc].

Generative AI creates entirely new content rather than just analyzing existing information.

It can generate : Text, Images, Code etc.

Security Risks:

a. Prompt Injection Attacks

Attackers can manipulate prompts to bypass safety controls, leak internal instruction or override system rules.

Best Example : https://shorturl.at/XAXTl [youtube shorts]

b. Hallucinations

In today’s era, we don’t trust human being’s but we have blind trust on AI models. We ask it’s suggestion from career to personal relationships.

You can ask, what’s wrong in it?

In most of the case, AI confidently gives false information with illogical facts.

Another Best Example : https://www.bbc.com/news/articles/ce3xgwyywe4o

c. Data Leakage

Most of the Large Learning Models [LLMs] may leak training data, memorize sensitive data and reveal confidential information easily.

Key Takeaways

- AI security starts with understanding the model type.

- Not all AI systems are equally risky.

- GenAI is the most dangerous from a security perspective.

From next blog …We will formally start learning about AI Security 😉

Stay updated with new blogs of hacklido by joining Hacklido’s Telegram Group🤠

https://t.me/hacklido