Lets start our blog with a proverb

“Trust is the new currency, untrustworthy AI fails”.

If AI systems can make decisions, they can also be attacked, manipulated or misused.

1. WHAT is AI Security?

AI Security is the practice of protecting AI systems from attacks, misuse, manipulation and failures, while also ensuring that AI itself doesnot become a security threat.

In one line, AI Security = Security AI + Security with AI.

2. Why Traditional Cybersecurity is Not Enough?

The cybersecurity practices which were are following now, only focuses on servers, networks, applications and databases.

But AI systems introduces new attack surfaces like training data, ML models, prompts, model outputs etc.

That’s why AI security is a new domain, not just an extension of cybersecurity.

3. AI for Security VS Security for AI

At first glance, we all assume both “AI for Security” and “Security for AI” as same, but they are not.

AI for Security

Here, AI for Security means we are using AI to protect systems.

Just think “AI is helping humans do cybersecurity better”.

Examples :

AI detecting malware

AI spotting phishing emails

AI identifying network intrusions

Security for AI

Taking security measures for protecting AI systems themselves.

Just think “Hackers attacking the brain instead of the server”

Examples :

Poisoning training data

Attacking an AI model to misclassify images

Injecting malicious prompts into ChatGPT like systems

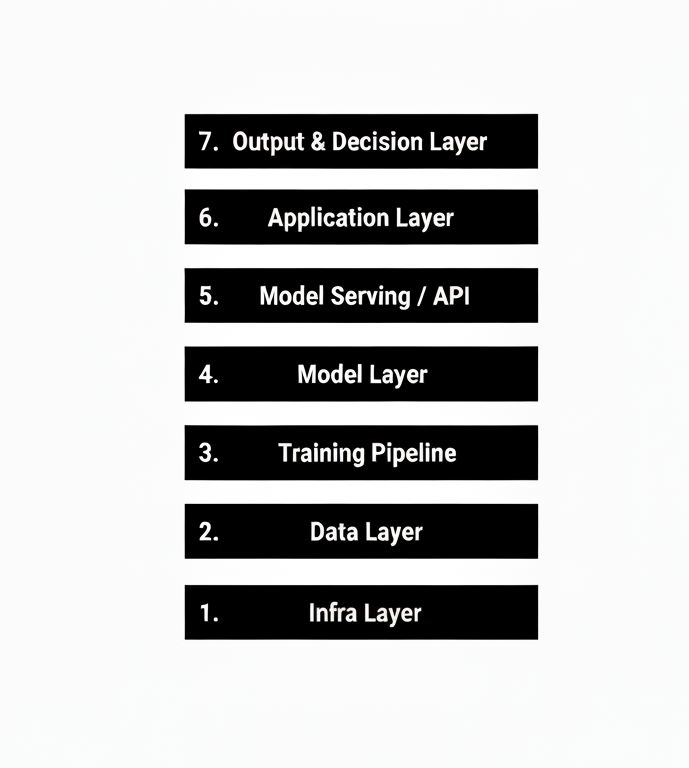

4. Threat Surface of AI Systems

Imagine threat surface is every place where an attacker can interact with or exploit a system.

AI systems have a much larger threat surface than normal software.

I know it is little overwhelming to grasp all the AI Security keywords in one day, we will try to understand each and every layer in depth day by day.

As of know, the main objective is to have an overview of Threat Surface Layers.

1. Infrastructure Layer

It includes cloud servers, GPUs/TPUs, storage buckets, containers and Kubernetes.

AI infra is expensive and powerful, making it a prime target.

Common Threats :

GPU hijacking (crypto mining)

Credential leakage

Misconfigured storage buckets

Example : Attackers steals API keys and uses your GPUs to train their own model.

2. Data Layer

It includes training data, validation/test data, user input data.

Common Threats :

Data poisoning

Label flipping

Backdoor injection

Example : Poisoning a face recognition dataset so one face is always misidentified.

3. Training Pipeline Layer

It includes data preprocessing scripts, feature engineering, training code.

Common Threats :

Malicious training scripts

Backdoored checkpoints

CI/CD manipulation

Example : A malicious library modifies training behavior silently.

4. Model Layer

Also known as Brain Layer

It includes architecture design, fine-tunes models, third-party models.

Common Threats :

Trojan triggers

Backdoored models

Model extraction

Example : A model behaves normally except when a specific pattern appears.

5. Model Serving / API Layer

It includes REST APIs, SDKs, Rate limiting logic

Common Threats :

Prompt abuse

Denial of Wallet

API key abuse

Example : Attacker sends thousands of queries to reconstruct your model

6. Application Layer

Where user interacts with AI system.

It includes web apps, chatbots, mobile apps etc

Common Threats :

Prompt Injection

Indirect prompt injection

Context Manipulation

Example : Hidden text in a webpage alters the chatbot’s response

So.. guys thats it for today

See you on Day 4 😉