The privacy-first promise of local AI has hit a major roadblock. A critical heap out-of-bounds read vulnerability, codenamed "Bleeding Llama," has been disclosed in Ollama, one of the most widely used frameworks for running Large Language Models (LLMs) on local hardware.

Tracked as CVE-2026-7482 with a CVSS score of 9.3, the flaw allows unauthenticated, remote attackers to exfiltrate heap memory from an Ollama server process. With roughly 300,000 instances currently exposed to the public internet, proprietary code, API keys, and private conversations are all at risk.

1. Technical Deep Dive: The "Unsafe" Performance Trade-off

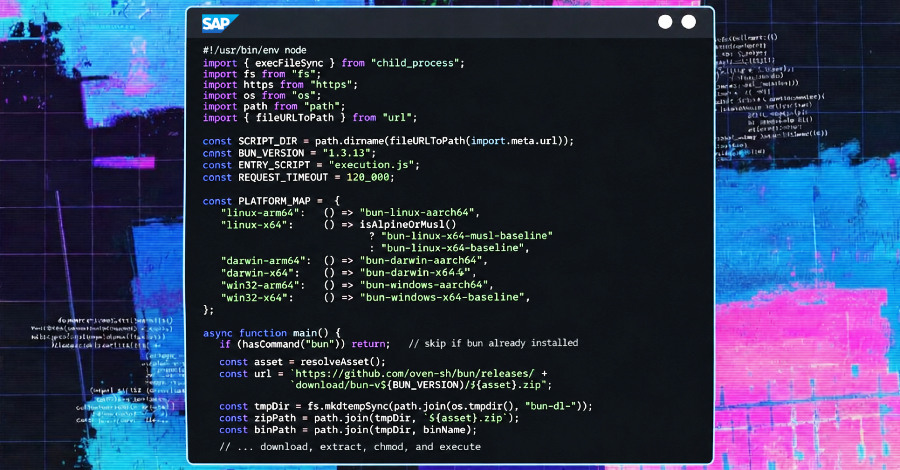

The vulnerability highlights a dangerous trend in AI development: trading memory safety for speed. Ollama is written in Go, a language typically praised for its memory safety. However, to optimize the GGUF model loader, developers used the unsafe package, which bypasses the bounds checks and type guarantees that normally stop a Go program from reading outside an allocated buffer.

- The GGUF Exploit: Attackers can upload a specially crafted GGUF file through the /api/create endpoint. By declaring a tensor size significantly larger than the data actually present in the file, they force the server to read past the end of its intended buffer and into adjacent heap memory.

- Lossless Exfiltration: Attackers have the server convert the tensor from F16 to F32 during the read. Every F16 value is exactly representable in F32, so the conversion discards nothing and the stolen heap data can be recovered intact. The sensitive memory lands on the attacker's disk exactly as it existed in the server's RAM.

- The "Push" Vector: Once the memory is leaked into a new model artifact, attackers can use the built-in /api/push feature to silently send the data to a server under their control.
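
The first two bullets can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Ollama's loader or its actual patch: tensor_fits is a hypothetical check of the kind a loader could run before touching tensor data, and f16_bits_to_f32_bits shows why widening F16 to F32 loses no information. All names here are invented for the example.

```python
# Bytes per element for the two tensor types mentioned above.
ELEMENT_SIZE = {"F16": 2, "F32": 4}


def tensor_fits(shape, dtype, offset, payload_len):
    """Hypothetical loader check: does the declared tensor lie inside the payload?"""
    n_elements = 1
    for dim in shape:
        if dim <= 0:
            return False
        n_elements *= dim
    # Python integers do not overflow; a Go or C loader would also need
    # overflow-safe multiplication here.
    return 0 <= offset and offset + n_elements * ELEMENT_SIZE[dtype] <= payload_len


def f16_bits_to_f32_bits(h):
    """Widen one IEEE 754 half-precision bit pattern to single precision."""
    sign = (h >> 15) & 0x1
    exp = (h >> 10) & 0x1F
    frac = h & 0x3FF
    if exp == 0x1F:  # infinity or NaN: carry the fraction bits across
        return (sign << 31) | (0xFF << 23) | (frac << 13)
    if exp == 0:
        if frac == 0:  # signed zero
            return sign << 31
        shift = 0  # subnormal: renormalize the fraction
        while not frac & 0x400:
            frac <<= 1
            shift += 1
        frac &= 0x3FF
        return (sign << 31) | ((113 - shift) << 23) | (frac << 13)
    return (sign << 31) | ((exp + 112) << 23) | (frac << 13)


# A 4-byte payload cannot hold a 1024 x 1024 F16 tensor, so the file is rejected.
print(tensor_fits((1024, 1024), "F16", 0, 4))  # False
# All 65,536 F16 patterns map to distinct F32 patterns, so nothing is lost.
print(len({f16_bits_to_f32_bits(h) for h in range(1 << 16)}))  # 65536
```

Because the widening is one-to-one, anyone holding the F32 output can map each value back to the two original bytes, which is what makes the leak readable rather than scrambled.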

2. The Blast Radius: What’s Being Leaked?

Because Ollama is often used as a shared backend for developer tools and internal assistants, the exfiltrated memory contains a "gold mine" of sensitive information:

- Agentic Data: Outputs and file contents from integrated tools like Claude Code.

- System Secrets: API keys, tokens, and credentials stored in environment variables.

- Intellectual Property: Proprietary code and internal contracts reviewed by AI models.

- User Privacy: Full chat histories and prompts from all concurrent users on the server.

Hacklido Intelligence: Emergency Defense Protocol

The "Bleeding Llama" incident proves that "local" does not automatically mean "secure." If your Ollama server is internet-facing without an authentication layer, you must assume it has already been compromised.

Strategic Defensive Steps:

- Immediate Update: Update to Ollama version 0.17.1 or later. This version contains a critical patch that enforces proper tensor size validation.

- Enforce Authentication: Ollama does not provide authentication by default. You must deploy an authentication proxy (like Nginx with Basic Auth) or an API gateway in front of all instances.

- Secret Rotation: If your server was exposed to the web, rotate all API keys, tokens, and environment credentials immediately. The exfiltration is silent, so a lack of obvious traces does not mean your secrets are safe.

- Audit Network Exposure: Check if your instance is listening on 0.0.0.0 (all interfaces). Re-bind it to 127.0.0.1 unless external access is strictly required and secured via VPN.
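
As a starting point for the network audit, the following Python sketch classifies a bind address as loopback-only or potentially exposed. It assumes the address comes from a setting such as Ollama's OLLAMA_HOST environment variable in forms like 0.0.0.0:11434 or http://127.0.0.1:11434; the helper names bind_host and is_loopback_only are hypothetical, and the check says nothing about firewalls, containers, or reverse proxies in front of the server.

```python
import ipaddress
from urllib.parse import urlsplit


def bind_host(value):
    """Extract the host from values like '0.0.0.0:11434' or 'http://127.0.0.1:11434'."""
    if "//" not in value:
        value = "//" + value  # lets urlsplit treat the value as a network location
    return urlsplit(value).hostname or ""


def is_loopback_only(host):
    """Return True only when the host is clearly restricted to the local machine."""
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Unparseable values and other hostnames are treated as potentially exposed.
        return False


for candidate in ("127.0.0.1:11434", "0.0.0.0:11434", "[::]:11434"):
    status = "loopback only" if is_loopback_only(bind_host(candidate)) else "potentially exposed"
    print(f"{candidate}: {status}")
```

The check is deliberately conservative: anything it cannot positively identify as loopback is reported as potentially exposed, so it errs toward prompting a manual review.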

The Verdict: Bleeding Llama is the first "frontier-class" infrastructure bug of 2026. It serves as a stark reminder that as AI moves from the lab to production, the underlying frameworks must be hardened with the same rigor as traditional web architecture.